Team introduction

We are Workshop A in the KuGou Live-Cool Dimension Team.

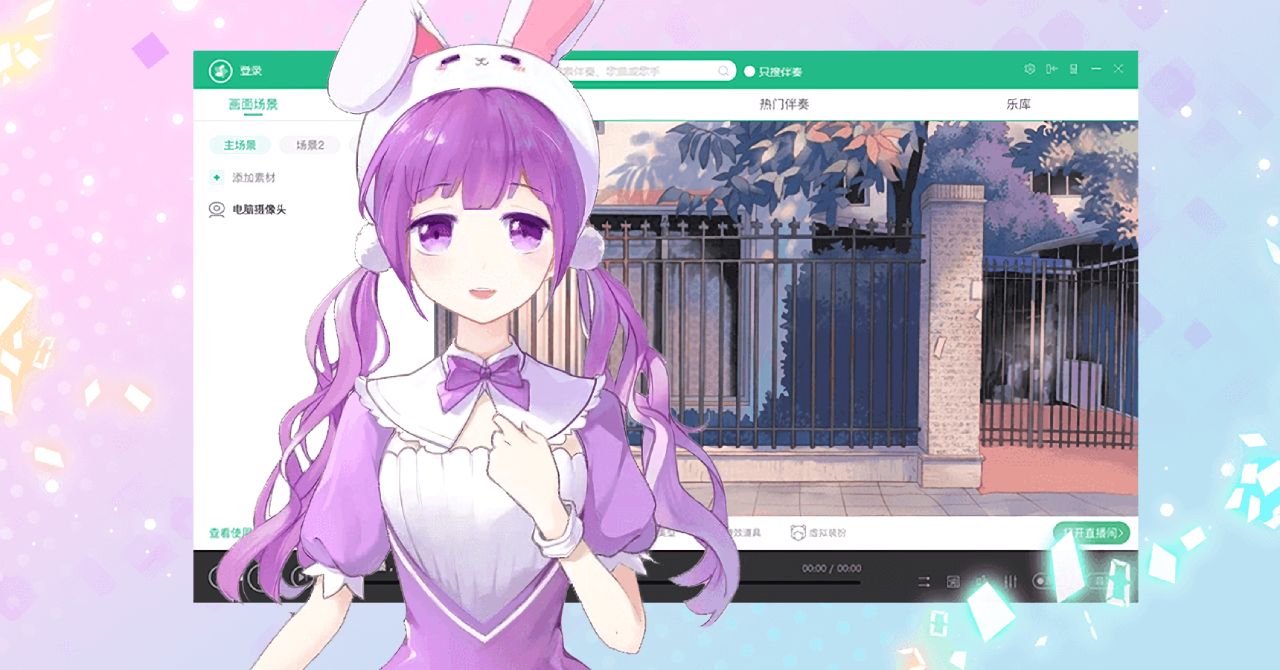

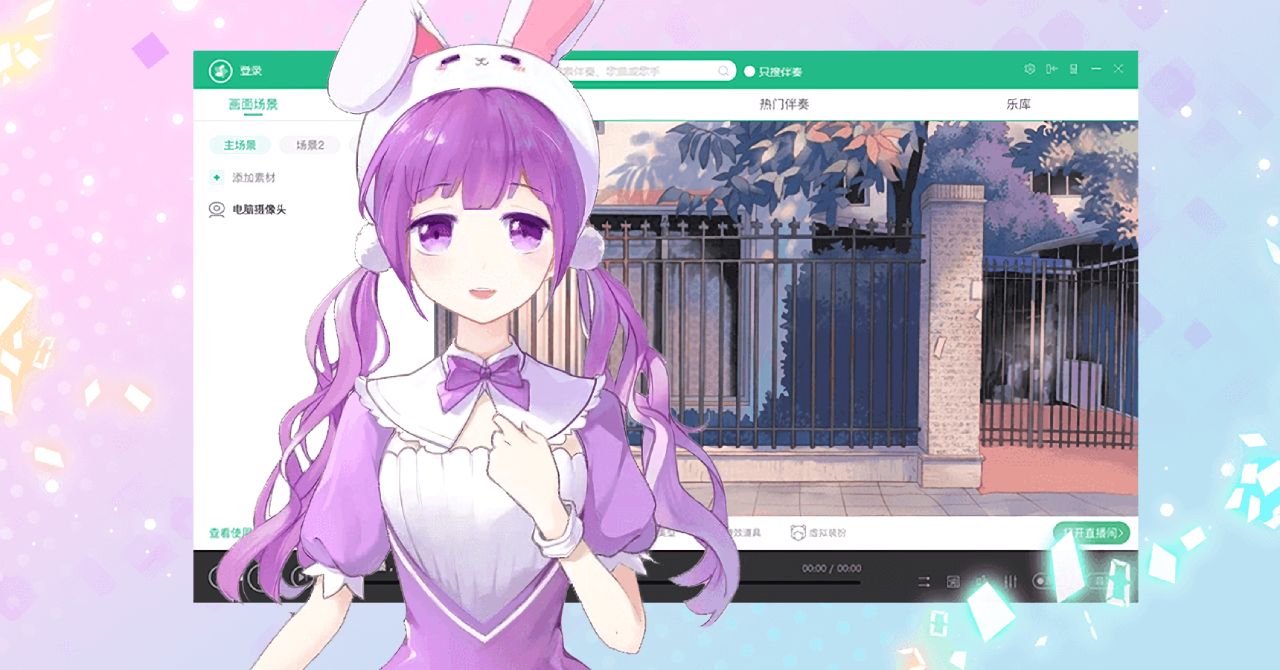

Cool Dimension is a virtual live streaming app for young people who love the two-dimensional (anime-style) world. While a stream is live, users can communicate in real time with whichever virtual YouTuber (VTuber) they choose. Each team member brings their own skills, and the team includes both live streaming technology experts and devotees of 2D culture. With the cooperation of the Virtual Live Streaming Technology and Product Operations Team, we would like to take this opportunity to introduce some of what makes Cool Dimension so appealing.

— Why did you decide to use Live2D for this project?

Thanks to its highly detailed expressions and its cost-effectiveness, Live2D is the perfect choice for VTuber production.

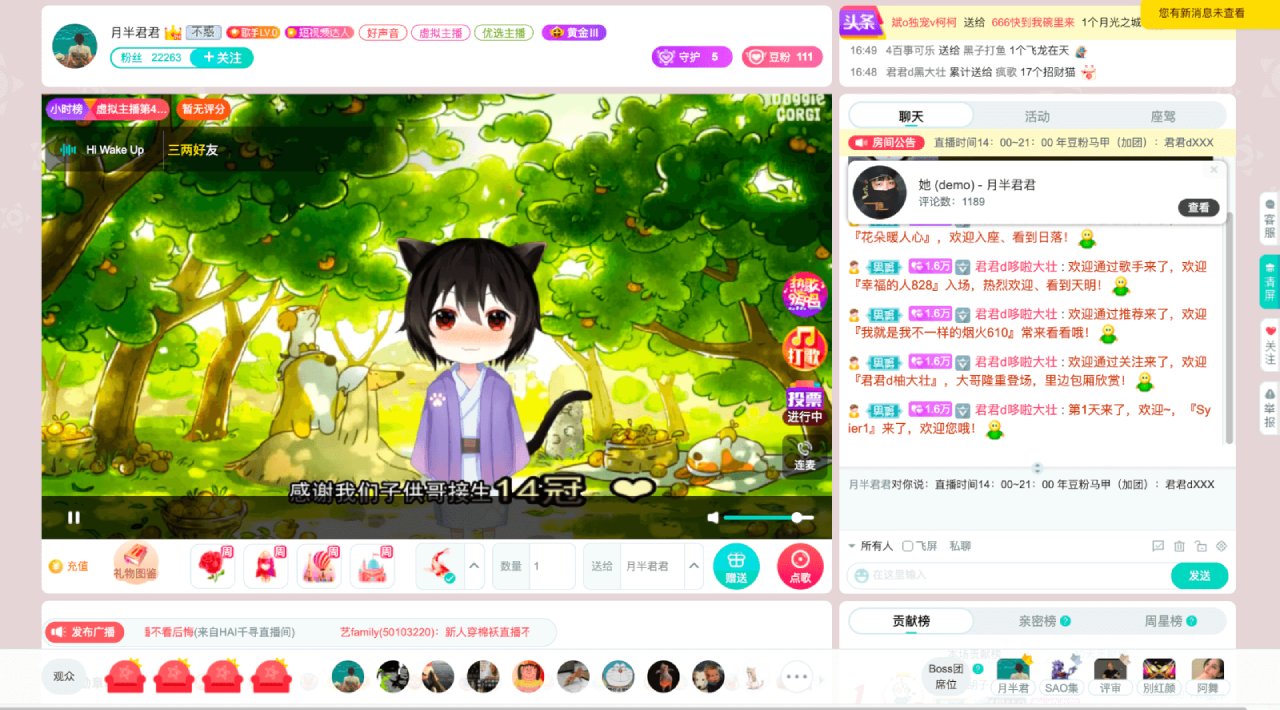

For Cool Dimension, we used a wide range of techniques to create a variety of virtual characters.

For example, alongside techniques such as 3D modeling and facial capture, we wanted to broaden the range of VTuber expression styles, and Live2D was essential for that. Live2D allowed us to animate virtual idols dynamically, each in a highly distinctive style, and to give viewers a wider range of VTubers to choose from.

The hand-drawn style, very close to 2D animation, is something only Live2D can provide, and it perfectly matches the look that many fans of 2D culture expect. Live2D can also render faithful, highly detailed reproductions of virtual characters, and we look forward to releasing even more Live2D virtual idols in the future.

— I am very happy to hear that you were impressed by Live2D’s greatest feature. Did you encounter any problems with adopting Live2D?

The facial movements of Live2D virtual characters play an extremely important role in how the person behind the character communicates directly with fans during a live stream. Creating those movements required extensive research into the algorithms that drive them.

Facial information, such as the state of the eyes, mouth, and eyebrows and the direction the face is pointing, is calculated from key facial points. This lets us map the facial information of the person behind the character onto the character, so that the character reproduces that person's expressions. We repeatedly tuned the algorithms and parameters to get smooth movement before settling on the methods that produced the best results.
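To illustrate the kind of mapping described above, here is a minimal Python sketch. It is not Cool Dimension's actual algorithm. The landmark layout (`Keypoints`), the calibration thresholds, and the smoothing factor are all hypothetical. The output keys use the standard Cubism parameter IDs `ParamEyeLOpen`, `ParamEyeROpen`, `ParamMouthOpenY`, and `ParamAngleX`, but whether a given model exposes them, and with what ranges, depends on how it was rigged. The sketch works in three steps:

- It estimates eye and mouth openness as the height-to-width ratio of four landmarks.
- It approximates head yaw from how far the nose tip sits from the face center.
- It applies an exponential moving average as a simple stand-in for the smoothing step.

```python
import math
from dataclasses import dataclass

Point = tuple[float, float]
# Four landmarks per feature: top, bottom, and the two horizontal corners.
Quad = tuple[Point, Point, Point, Point]

# Hypothetical calibration values; real ones would be tuned per tracker and user.
EYE_CLOSED, EYE_OPEN = 0.10, 0.30
MOUTH_CLOSED, MOUTH_OPEN = 0.05, 0.60
MAX_YAW_DEG = 30.0


@dataclass
class Keypoints:
    """Hypothetical subset of key facial points, in image pixel coordinates."""
    left_eye: Quad
    right_eye: Quad
    mouth: Quad
    nose_tip: Point
    face_left: Point   # face contour at cheek height
    face_right: Point


def dist(a: Point, b: Point) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def normalize(value: float, low: float, high: float) -> float:
    """Map value linearly from [low, high] to [0, 1], clamping values outside."""
    return max(0.0, min(1.0, (value - low) / (high - low)))


def aspect_ratio(quad: Quad) -> float:
    """Height-to-width ratio of an eye or the mouth; small means closed."""
    top, bottom, corner_a, corner_b = quad
    return dist(top, bottom) / max(dist(corner_a, corner_b), 1e-6)


def to_parameters(kp: Keypoints) -> dict[str, float]:
    """Convert one frame of keypoints into Live2D-style parameter values."""
    center_x = (kp.face_left[0] + kp.face_right[0]) / 2
    half_width = max(dist(kp.face_left, kp.face_right) / 2, 1e-6)
    # Nose offset from the face center approximates yaw in [-1, 1].
    # The sign convention (and any camera mirroring) is an assumption.
    yaw = max(-1.0, min(1.0, (kp.nose_tip[0] - center_x) / half_width))
    return {
        "ParamEyeLOpen": normalize(aspect_ratio(kp.left_eye), EYE_CLOSED, EYE_OPEN),
        "ParamEyeROpen": normalize(aspect_ratio(kp.right_eye), EYE_CLOSED, EYE_OPEN),
        "ParamMouthOpenY": normalize(aspect_ratio(kp.mouth), MOUTH_CLOSED, MOUTH_OPEN),
        "ParamAngleX": yaw * MAX_YAW_DEG,
    }


class Smoother:
    """Per-parameter exponential moving average to damp frame-to-frame jitter."""

    def __init__(self, alpha: float = 0.4):
        self.alpha = alpha  # 0 < alpha <= 1; higher reacts faster
        self.state: dict[str, float] = {}

    def update(self, params: dict[str, float]) -> dict[str, float]:
        for name, value in params.items():
            previous = self.state.get(name, value)  # first frame: no smoothing
            self.state[name] = previous + self.alpha * (value - previous)
        return dict(self.state)


if __name__ == "__main__":
    frame = Keypoints(
        left_eye=((100, 95), (100, 104), (85, 100), (115, 100)),
        right_eye=((200, 97), (200, 100), (185, 100), (215, 100)),
        mouth=((150, 160), (150, 160), (130, 160), (170, 160)),
        nose_tip=(190, 130),
        face_left=(70, 120),
        face_right=(230, 120),
    )
    print(Smoother().update(to_parameters(frame)))
```

The tuning trade-off is mostly in `alpha`. A smaller value gives steadier but laggier motion, and a larger one tracks quickly but lets more tracker jitter through. Balancing the two is the kind of question that repeated parameter tuning has to settle.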

— Did you run into any issues or problems while using Live2D?

When the person behind the character uses Live2D, the materials for the virtual character must first be imported. To make importing virtual characters easier for users, we felt there was a need for standards that prescribe the format of the imported data, or possibly for official virtual material packages that users could start from.
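Here is one concrete form such an import standard could take: a minimal Python sketch that checks a Cubism 3 model package before importing it. The `Version`, `FileReferences`, `Moc`, `Textures`, `Physics`, `Pose`, and `DisplayInfo` keys follow the layout of Cubism's `.model3.json` settings file. The rules themselves are hypothetical, including which entries are required, the error messages, and the `validate_model3` function. The sketch also assumes that `package_files` holds paths relative to the folder containing the `.model3.json`.

```python
import json

# Optional single-file entries that are checked only when present.
OPTIONAL_SINGLE_FILES = ("Physics", "Pose", "DisplayInfo")


def validate_model3(manifest_text: str, package_files: set[str]) -> list[str]:
    """Check a .model3.json against hypothetical import rules.

    package_files holds the paths in the package, relative to the folder
    containing the .model3.json. An empty result means the package passed.
    """
    try:
        manifest = json.loads(manifest_text)
    except json.JSONDecodeError as exc:
        return [f"model3.json is not valid JSON: {exc}"]
    if not isinstance(manifest, dict):
        return ["model3.json must contain a JSON object"]

    problems: list[str] = []
    if manifest.get("Version") != 3:
        problems.append("Version must be 3")

    refs = manifest.get("FileReferences")
    if not isinstance(refs, dict):
        problems.append("FileReferences is missing")
        return problems

    moc = refs.get("Moc")
    if not isinstance(moc, str) or not moc.endswith(".moc3"):
        problems.append("FileReferences.Moc must name a .moc3 file")
    elif moc not in package_files:
        problems.append(f"missing file: {moc}")

    textures = refs.get("Textures")
    if not isinstance(textures, list) or not textures:
        problems.append("FileReferences.Textures must list at least one texture")
    else:
        problems += [
            f"missing file: {t}"
            for t in textures
            if not isinstance(t, str) or t not in package_files
        ]

    for key in OPTIONAL_SINGLE_FILES:
        path = refs.get(key)
        if path is not None and (not isinstance(path, str) or path not in package_files):
            problems.append(f"missing file: {path}")
    return problems
```

An app could run a check like this when a user uploads a character. It could then reject an incomplete package with a readable message instead of failing later while loading the model.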

— What can you tell us about the future plans for the project and the team?

We plan to develop virtual communities populated by virtual idols, where users will also be able to add virtual characters they create themselves. We want to support more creators in enjoying the production of virtual content that goes beyond live streaming, text, videos, and pictures.

— In closing, do you have any messages for the readers?

As Live2D comes to support more basic character movements, such as looking backward, we look forward to expanding the range of interactions with virtual characters even further.

— Thank you for your time today!